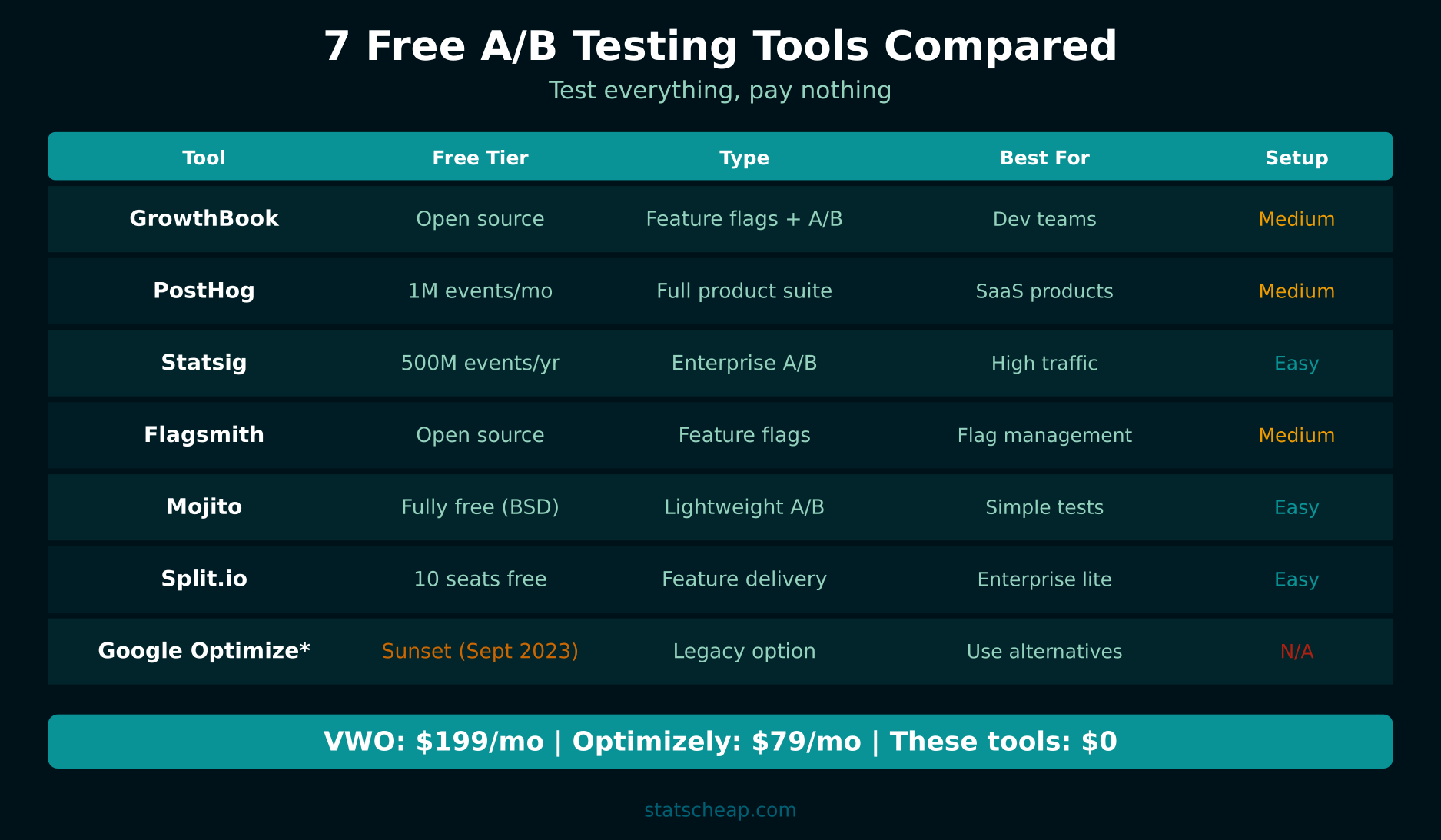

Google Optimize shut down in September 2023, and the A/B testing world hasn’t been the same since. VWO starts at $199/month. Optimizely’s plans begin around $79/month. AB Tasty? Don’t even ask — enterprise pricing means four figures. If you relied on Google Optimize or simply can’t justify spending hundreds per month on testing, you need alternatives. Good news: several free A/B testing tools have stepped in to fill the gap — and some are better than what Google offered.

I’ve tested every free and open-source A/B testing platform I could find over the past year. Most were too complex, too limited, or too buggy for real-world use. The first six entries below are the tools that actually work for small businesses and startups operating on tight budgets; the seventh explains what happened to Google Optimize.

7 Free A/B Testing Tools That Actually Work

1. GrowthBook — Best Open-Source A/B Testing Platform

Price: Free (open source, self-hosted) or free cloud tier

Best for: Teams with a developer who can handle setup

Platforms: Web, mobile, backend

GrowthBook is the best Google Optimize replacement for technically inclined teams. It’s fully open source, connects to your existing data source (BigQuery, Snowflake, Postgres, Mixpanel), and supports both front-end and back-end experiments. The cloud-hosted free tier includes up to 3 team members with unlimited experiments.

What you get free: Unlimited experiments, feature flags, Bayesian statistics engine, SDK for JavaScript/React/Node/Python/Ruby/Go, integration with your existing analytics. The self-hosted version has no limits at all.

The learning curve is steeper than Google Optimize was, but the capabilities are significantly more powerful. If you have access to a developer for initial setup, GrowthBook is the best long-term solution.

2. PostHog — A/B Testing Plus Product Analytics

Price: Free (1 million events/month)

Best for: Product teams wanting testing and analytics in one tool

Platforms: Web, mobile

PostHog combines A/B testing with product analytics, feature flags, session recordings, and surveys, all with a generous free tier of 1 million events per month. For low- to medium-traffic sites, that amounts to a complete experimentation and analytics platform at no cost.

What you get free: A/B testing (1M events), feature flags, product analytics, session recordings (15,000/month), surveys. PostHog calculates statistical significance automatically and provides clear results dashboards.

3. Statsig — Most Generous Free Tier

Price: Free (500 million events per year, then pay-as-you-go)

Best for: Growing businesses that need serious scale

Platforms: Web, mobile, backend

Statsig’s free tier is absurdly generous: 500 million events per year, which covers most businesses well beyond the startup phase. It offers feature gates (feature flags), experiments (A/B tests), and auto-tuning (automatically picks the winning variant). For pure volume, no other free tool on this list comes close.

What you get free: 500M events/year, unlimited experiments, feature flags, auto-tune experiments, holdout groups, mutual exclusion groups, statistical analysis with Bonferroni corrections.

4. Flagsmith — Open Source Feature Flags with A/B Testing

Price: Free (open source) or free cloud (50,000 requests/month)

Best for: Teams already using feature flags who want to add testing

Platforms: Web, mobile, backend

Flagsmith is primarily a feature flag platform, but it includes A/B testing capabilities through multivariate flags and percentage rollouts. The open-source version is completely free with no limits. The cloud version’s free tier includes 50,000 API requests per month. In addition, it integrates with analytics tools so you can measure experiment results in your existing dashboards.

What you get free: Feature flags, multivariate testing, percentage rollouts, user segmentation, audit logs. Self-hosted version is unlimited.

5. Mojito — Fully Free A/B Testing (BSD License)

Price: Free forever (BSD license, open source)

Best for: Front-end developers who want full control

Platforms: Web

Mojito is a lightweight, fully open-source A/B testing framework released under the BSD license. It’s designed for developers who want to run experiments without vendor lock-in or usage limits. You host it yourself, write experiments in JavaScript/CSS, and send results to your own analytics. Consequently, there are no event caps, no user limits, and no surprise bills.

What you get free: Unlimited experiments, client-side and server-side testing, split URL testing, works with any analytics backend. The trade-off is that setup requires development skills — there’s no visual editor.

6. Split.io — Enterprise-Grade with a Free Tier

Price: Free (up to 10 seats)

Best for: Small teams at growing companies

Platforms: Web, mobile, backend

Split.io offers feature flags, A/B experiments, and progressive rollouts with a free tier that includes up to 10 seats. It’s built for engineering teams and includes robust targeting rules, metric tracking, and kill switches. However, the free tier is limited in the number of feature flags and experiments you can run simultaneously.

What you get free: 10 seats, feature flags, A/B testing, targeting rules, metric tracking, SDKs for all major languages.

7. Google Optimize (Sunset) — What Happened and Why It Matters

Google Optimize was the most popular free A/B testing tool until Google shut it down in September 2023. It was simple, visual, and integrated perfectly with Google Analytics. Its disappearance left millions of small businesses without a free testing tool — which is exactly why the alternatives above exist. If you’re here because you used Optimize, GrowthBook and PostHog are the closest replacements in terms of ease of use.

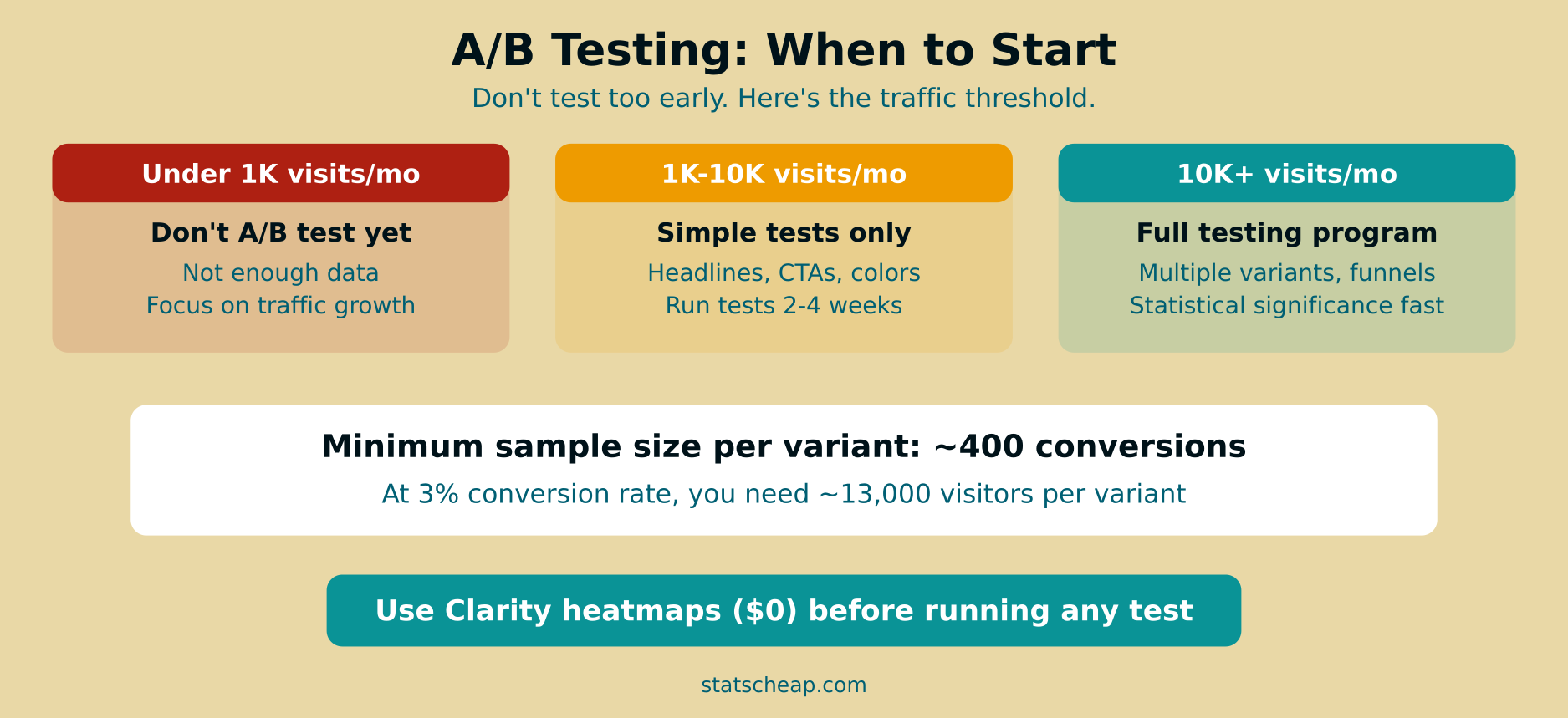

When to Start A/B Testing: Traffic Thresholds

Having a free A/B testing tool means nothing if you don’t have enough traffic to generate statistically significant results. Here’s the reality:

| Monthly Visitors | Testing Strategy | What to Focus On |
|---|---|---|
| Under 1,000 | Don’t A/B test yet | Fix obvious issues, improve content, drive traffic |
| 1,000–10,000 | Simple tests only | Big changes: headlines, CTAs, page layouts |
| 10,000–50,000 | Regular testing program | Systematic testing of all conversion elements |
| 50,000+ | Full experimentation culture | Multiple concurrent tests, micro-optimizations |

The math is straightforward: as a rule of thumb, you need approximately 400 conversions per variant to detect a meaningful difference. If your conversion rate is 3%, that works out to about 13,300 visitors per variant (400 ÷ 0.03), so a 50/50 test needs about 27,000 visitors in total to reach significance. Testing with less traffic than that mostly gives you random noise, not insights.
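To run the same arithmetic for your own site, here is a minimal Python sketch of the rule of thumb above. The function name `visitors_needed` and its parameters are made up for illustration, and the 400-conversion target is this article's rule of thumb rather than a formal power calculation.

```python
import math


def visitors_needed(conversion_rate, conversions_per_variant=400, variants=2):
    """Total visitors needed so every variant reaches the conversion target.

    conversion_rate is a fraction (0.03 means 3%). Traffic is assumed to be
    split evenly across variants.
    """
    if not 0 < conversion_rate <= 1:
        raise ValueError("conversion_rate must be between 0 and 1")
    per_variant = math.ceil(conversions_per_variant / conversion_rate)
    return per_variant * variants


print(visitors_needed(0.03))              # 26668, i.e. "about 27,000"
print(visitors_needed(0.03, variants=5))  # 66670 for an A/B/C/D/E test
```

The second call shows why extra variants are expensive: the total traffic requirement grows in step with the number of variants.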

What to Test First (For Maximum Impact)

When you’re ready to test, start with the changes most likely to move the needle, and focus on pages with the highest traffic and conversion potential:

- Headlines and value propositions: The first thing visitors see. A better headline can double conversion rates.

- Call-to-action buttons: Text, color, size, and placement all matter. Test “Buy Now” vs “Add to Cart” vs “Get Started.”

- Page layouts: Long-form vs short-form, image placement, form length. Big structural changes produce big results.

- Pricing display: How you present prices (monthly vs annual, with or without a crossed-out original price) significantly affects conversions.

- Social proof placement: Where you show testimonials, reviews, and trust badges can change buyer confidence.

Before testing, use Microsoft Clarity heatmaps to identify what’s broken. Heatmaps show you where visitors click, how far they scroll, and where they rage-click. This tells you what to test — and that’s half the battle. See our Clarity vs Hotjar comparison to understand why Clarity is the best free option for this.

Common A/B Testing Mistakes (and How to Avoid Them)

Testing With Too Little Traffic

The most common mistake. Running a test for 3 days with 200 visitors and declaring a winner is not A/B testing — it’s guessing. Wait until you have at least 400 conversions per variant before drawing conclusions. Patience beats premature optimization.

Stopping Tests Too Early

Even with enough traffic, stopping a test the moment one variant looks better leads to false positives. Run tests for at least 2 full business cycles (typically 2–4 weeks) to account for day-of-week and time-of-month variations. Many tools will show an early “winning” variant that reverses as more data comes in.
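If you want to see the early-stopping problem for yourself, the sketch below simulates A/A tests, where both arms have the same true conversion rate, so any "winner" is a false positive. It compares checking significance at every interim look against checking only once at the end. Everything here is an assumption chosen for illustration: the function names, the 5% conversion rate, the traffic numbers, and the simple two-proportion z-test with a 1.96 cutoff. The tools above use their own statistics engines, which may behave differently.

```python
import math
import random


def z_score(conv_a, n_a, conv_b, n_b):
    """Two-proportion z-score with a pooled standard error."""
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    return 0.0 if se == 0 else (conv_b / n_b - conv_a / n_a) / se


def simulate_aa_tests(runs=300, visitors_per_arm=2000, rate=0.05,
                      look_every=200, seed=1):
    """Simulate A/A tests where both arms share the same true rate.

    Returns (share of runs that looked significant at ANY interim look,
             share of runs that look significant at the final look).
    """
    rng = random.Random(seed)
    flagged_any_look = 0
    flagged_final = 0
    for _ in range(runs):
        conv_a = conv_b = 0
        seen_significant = False
        for i in range(1, visitors_per_arm + 1):
            conv_a += rng.random() < rate
            conv_b += rng.random() < rate
            # Peek at the results every `look_every` visitors per arm.
            if i % look_every == 0 and abs(z_score(conv_a, i, conv_b, i)) > 1.96:
                seen_significant = True
        flagged_any_look += seen_significant
        flagged_final += abs(z_score(conv_a, visitors_per_arm,
                                     conv_b, visitors_per_arm)) > 1.96
    return flagged_any_look / runs, flagged_final / runs


peeking_rate, final_rate = simulate_aa_tests()
print(f"False positives if you stop at the first 'win': {peeking_rate:.1%}")
print(f"False positives if you only check at the end:   {final_rate:.1%}")
```

Because the last look is also the final check, the peeking rate can never be lower than the end-only rate, and with repeated looks it is usually noticeably higher. That gap is the cost of stopping at the first "significant" result.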

Testing Too Many Variants at Once

An A/B/C/D/E test splits your traffic five ways, meaning each variant gets only 20% of visitors. With limited traffic, this makes reaching statistical significance nearly impossible. Consequently, stick to A/B (two variants) unless you have significant traffic. Test one change at a time so you know exactly what caused the improvement.

Ignoring Segment Differences

A test might show no overall winner, but one variant could perform significantly better for mobile users while another wins on desktop. Always check results by device, traffic source, and new vs returning visitors. Free tools like GrowthBook and PostHog support this level of segmentation.
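As a rough illustration of a segment breakdown, the sketch below groups raw results by segment and variant. The helper `conversion_by_segment` is a hypothetical name, and the rows are made-up numbers constructed so that the overall result is a tie while the segments disagree; they are not real test data.

```python
from collections import defaultdict


def conversion_by_segment(rows):
    """rows: iterable of (segment, variant, converted) tuples.

    Returns {segment: {variant: (visitors, conversions, rate)}}.
    """
    counts = defaultdict(lambda: defaultdict(lambda: [0, 0]))
    for segment, variant, converted in rows:
        cell = counts[segment][variant]
        cell[0] += 1               # visitors
        cell[1] += int(converted)  # conversions
    return {
        segment: {variant: (n, c, c / n) for variant, (n, c) in by_variant.items()}
        for segment, by_variant in counts.items()
    }


def make_rows(segment, variant, conversions, visitors):
    """Build made-up rows: `conversions` converted visitors out of `visitors`."""
    return ([(segment, variant, True)] * conversions
            + [(segment, variant, False)] * (visitors - conversions))


# Illustrative data only: A and B both convert 70 of 2,000 visitors overall.
rows = (make_rows("mobile", "A", 30, 1000) + make_rows("mobile", "B", 45, 1000)
        + make_rows("desktop", "A", 40, 1000) + make_rows("desktop", "B", 25, 1000))

for segment, by_variant in conversion_by_segment(rows).items():
    for variant, (n, c, rate) in sorted(by_variant.items()):
        print(f"{segment:8s} {variant}: {c}/{n} = {rate:.1%}")
```

Keep in mind that each segment has fewer visitors than the test as a whole, so a per-segment difference needs the same scrutiny on sample size as the overall result.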

Frequently Asked Questions

How long should A/B tests run?

Minimum 2 weeks, ideally 4 weeks, regardless of when you hit statistical significance. This ensures you capture full weekly patterns (weekday vs weekend behavior) and avoid seasonal flukes. Therefore, set a minimum test duration before you launch and don’t peek at results until then.
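One way to fix the duration before launch is to work it out from your traffic and then round up to whole weeks. The sketch below does that; `planned_test_days` is a hypothetical helper, and the traffic figures in the example calls are assumptions.

```python
import math


def planned_test_days(visitors_needed, daily_visitors, min_weeks=2):
    """Days to run a test: enough for the sample, in whole weeks, never under min_weeks."""
    if daily_visitors <= 0:
        raise ValueError("daily_visitors must be positive")
    days_for_sample = math.ceil(visitors_needed / daily_visitors)
    # Round up to full weeks so every weekday is represented equally.
    weeks = max(min_weeks, math.ceil(days_for_sample / 7))
    return weeks * 7


print(planned_test_days(27_000, 1_500))   # 21: 18 days of traffic, rounded up to 3 weeks
print(planned_test_days(27_000, 10_000))  # 14: sample is reached in 3 days, but the 2-week floor applies
```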

Can I A/B test with low traffic?

Below 1,000 monthly visitors, traditional A/B testing doesn’t work — you won’t reach statistical significance in a reasonable timeframe. Instead, focus on qualitative research: use Clarity session recordings to watch real visitors, conduct user surveys, and make evidence-based improvements without formal testing. When your traffic grows, start testing.

Is GrowthBook hard to set up?

It depends on your technical background. If you’re comfortable with JavaScript and basic API integrations, setup takes a few hours. If you’ve never worked with SDKs or feature flags, expect a learning curve. However, GrowthBook’s documentation is excellent, and the cloud version simplifies hosting. For non-technical users, PostHog might be easier to start with. Whichever tool you choose, add your test results to your weekly analytics report to track testing impact over time.

Your Budget A/B Testing Action Plan

Here’s how to start testing without spending money:

- Check your traffic first. Under 1,000 monthly visitors? Focus on traffic growth, not testing. ($0)

- Install Microsoft Clarity to identify what needs testing via heatmaps and recordings. ($0)

- Choose your tool: GrowthBook for developers, PostHog for all-in-one, Statsig for generous limits. ($0)

- Start with one high-impact test — your homepage headline or main CTA. ($0)

- Run the test for at least 2 weeks. Don’t peek early. ($0)

- Implement the winner, then test something else. Build a continuous testing habit. ($0)

Seven free A/B testing tools, zero excuses. Google Optimize is gone, but better alternatives exist — and they won’t cost you a dime. Start small, test smart, and let data guide your decisions instead of guesswork.